In this article, we will see how to extract structured information from web pages using BeautifulSoup and CSS selectors.

Web pages are rendered by the browser from HTML and CSS code, but much of this information is not interesting when scraping a site and actually makes data extraction more difficult. BeautifulSoup allows us to easily access the information that we need by providing idiomatic ways of navigating, searching, and modifying the parse tree.

WebScraping with BeautifulSoup

Pulling the HTML out

BeautifulSoup is not a web scraping library per se. It is a library that allows you to efficiently and easily pull out information from HTML. In the real world, it is often used for web scraping projects.

So, to begin, we'll need HTML. We will pull the HTML from the Hacker News landing page using the requests Python package.
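A minimal sketch of this step, assuming the requests package is installed. The helper name `fetch_html`, the `HN_URL` constant, and the timeout value are illustrative choices rather than anything prescribed by the library.

```python
import requests

HN_URL = "https://news.ycombinator.com/"


def fetch_html(url, timeout=10):
    """Download a page and return its HTML as text."""
    response = requests.get(url, timeout=timeout)
    # Raise an exception on 4xx/5xx responses instead of parsing an error page.
    response.raise_for_status()
    return response.text


# Usage (performs a real network request):
# html = fetch_html(HN_URL)
```

Failing loudly on a bad status code keeps the later parsing steps from silently working on an error page.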

Parsing the HTML with BeautifulSoup

Now that the HTML is accessible, we will use BeautifulSoup to parse it. If you haven't already, you can install the package with a simple pip install beautifulsoup4. In the rest of this article, we will refer to BeautifulSoup4 as BS4.

We now need to parse the HTML and load it into a BS4 structure.
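Parsing could look like the sketch below. The short `html` string is a stand-in for the page downloaded earlier, so that the snippet runs without network access.

```python
from bs4 import BeautifulSoup

# Stand-in for the HTML downloaded in the previous step.
html = (
    "<html><head><title>Hacker News</title></head>"
    "<body><a href='/news'>news</a></body></html>"
)

# "html.parser" is Python's built-in parser; lxml or html5lib also work if installed.
soup = BeautifulSoup(html, "html.parser")
print(type(soup).__name__)
```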

This soup object is very handy and allows us to easily access many useful pieces of information, such as the page title, the links in the page, and the page text.
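A few of these accessors, sketched on a small sample document (the sample HTML is made up for illustration):

```python
from bs4 import BeautifulSoup

html = """
<html><head><title>Sample page</title></head>
<body><p>Hello <a href="/a">first</a> and <a href="/b">second</a>.</p></body></html>
"""
soup = BeautifulSoup(html, "html.parser")

title_tag = soup.title              # the <title> tag itself
title_text = soup.title.string      # the title as a string
nb_links = len(soup.find_all("a"))  # number of links in the page
page_text = soup.get_text()         # all the text, with tags stripped

print(title_tag, title_text, nb_links)
```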

Targeting DOM elements

You might begin to see a pattern in how to use this library. It allows you to quickly and elegantly target the DOM elements you need.

If you need to select a DOM element by its tag (<p>, <a>, <span>, ...), you can simply use soup.<tag>. The caveat is that it will only select the first HTML element with that tag.

For example, if I want the first link, I just have to use soup.a.
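As a quick sketch on a made-up snippet of HTML, attribute-style access returns only the first matching tag:

```python
from bs4 import BeautifulSoup

html = (
    '<p>Read <a href="https://example.com/one">one</a> '
    'or <a href="https://example.com/two">two</a>.</p>'
)
soup = BeautifulSoup(html, "html.parser")

# soup.a is the first <a> tag in the document; the second link is ignored.
first_link = soup.a
print(first_link)
```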

This element will also have many useful methods to quickly extract information:
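For instance, a tag exposes its text and its attributes. The sample link below is an assumption made for the demonstration:

```python
from bs4 import BeautifulSoup

html = '<a href="https://example.com/one" class="storylink">First story</a>'
soup = BeautifulSoup(html, "html.parser")
first_link = soup.a

link_text = first_link.text         # the text inside the tag
link_href = first_link.get("href")  # an attribute value, or None if absent
link_title = first_link.get("title")
link_attrs = first_link.attrs       # all attributes as a dictionary

print(link_text, link_href, link_title)
```

Using `.get()` rather than `first_link["title"]` avoids a `KeyError` when an attribute is missing.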

This is a simple example. If you want to select the first element based on its id or class it is not much more difficult:
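A sketch with `find`, using hypothetical id and class names:

```python
from bs4 import BeautifulSoup

html = """
<div id="header">Top</div>
<p class="story">First story</p>
<p class="story">Second story</p>
"""
soup = BeautifulSoup(html, "html.parser")

# First element with a given id.
header = soup.find(id="header")

# First element with a given class ("class" is a Python keyword, hence class_).
first_story = soup.find(class_="story")

print(header.text, "|", first_story.text)
```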

And if you don't want the first matching element but instead all matching elements, just replace find with find_all.
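The same kind of lookup with `find_all`, again on made-up markup, returns every match as a list:

```python
from bs4 import BeautifulSoup

html = """
<ul>
<li class="item">alpha</li>
<li class="item">beta</li>
<li class="other">gamma</li>
</ul>
"""
soup = BeautifulSoup(html, "html.parser")

# find_all returns a list-like ResultSet of all matching tags.
items = soup.find_all("li", class_="item")
item_texts = [li.text for li in items]
print(item_texts)
```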

This simple and elegant interface allows you to quickly write short and powerful Python snippets.

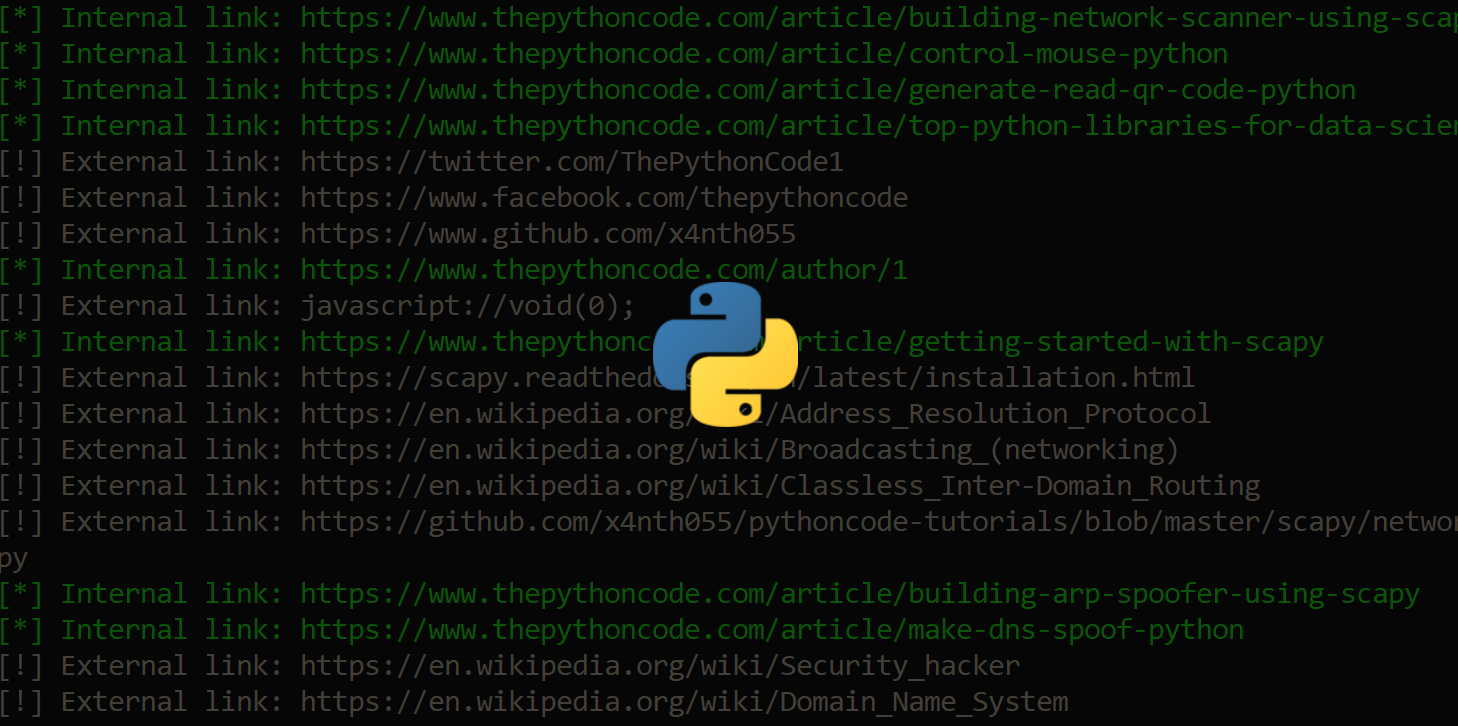

For example, let's say that I want to extract all links in this page and find the top three links that appear the most on the page. All I have to do is this:
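One way to write this, sketched on a small hand-made page instead of a live site, is to combine `find_all` with `collections.Counter`:

```python
from collections import Counter

from bs4 import BeautifulSoup

html = """
<a href="/a">1</a><a href="/b">2</a><a href="/a">3</a><a href="/c">4</a><a href="/a">5</a>
<a href="/b">6</a><a href="/c">7</a><a href="/a">8</a><a href="/b">9</a><a href="/d">10</a>
"""
soup = BeautifulSoup(html, "html.parser")

# Collect every href, then count how often each one appears.
all_hrefs = [a.get("href") for a in soup.find_all("a")]
top_3_links = Counter(all_hrefs).most_common(3)
print(top_3_links)
```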

Advanced usage

BeautifulSoup is a great example of a library that is both easy to use and powerful.

You can do much more to select elements using BeautifulSoup. Although we won't cover those cases in detail in this article, here are a few examples of advanced things you can do:

- Select elements with regexp

- Select elements with a custom function (links that have Google in them for example)

- Iterate over sibling elements
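The three items above can be sketched briefly as follows. The sample HTML and the helper name `has_google_href` are assumptions made for the example.

```python
import re

from bs4 import BeautifulSoup

html = """
<div>
<h2>Links</h2>
<a href="https://www.google.com/search">Google search</a>
<a href="https://example.com/docs">Docs</a>
<a href="/relative/path">Relative</a>
</div>
"""
soup = BeautifulSoup(html, "html.parser")

# 1. Regular expression: links whose href starts with http:// or https://
absolute_links = soup.find_all("a", href=re.compile(r"^https?://"))


# 2. Custom function: links that have "google" in their href
def has_google_href(tag):
    return tag.name == "a" and "google" in tag.get("href", "")


google_links = soup.find_all(has_google_href)

# 3. Siblings: the <a> tags that follow the <h2> at the same level
sibling_links = soup.h2.find_next_siblings("a")

print(len(absolute_links), len(google_links), len(sibling_links))
```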

We also only covered how to target elements but there is also a whole section about updating and writing HTML. Again, we won't cover this in this article.

Let's now talk about CSS selectors.

CSS selectors

Why learn about CSS selectors if BeautifulSoup can select all elements with its built-in methods?

Well, you'll soon understand.

Hard DOM

Sometimes, the HTML document won't have useful class or id attributes. Selecting elements with BS4 without relying on that information can be quite verbose.

For example, let's say that you want to extract the score of a post on the HN homepage, but you can't use class name or id in your code. Here is how you could do it:
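The sketch below works on a simplified, hypothetical table that only loosely imitates the HN layout (a title row followed by a row holding an empty cell and a score cell). The real page structure may differ, so treat the row and cell checks as assumptions.

```python
from bs4 import BeautifulSoup

html = """
<table>
<tr><td><a href="https://example.com/a">First post</a></td></tr>
<tr><td></td><td><span>120 points</span> by <a href="/user?id=alice">alice</a></td></tr>
<tr><td><a href="https://example.com/b">Second post</a></td></tr>
<tr><td></td><td><span>45 points</span> by <a href="/user?id=bob">bob</a></td></tr>
</table>
"""
soup = BeautifulSoup(html, "html.parser")

scores = []
for tr in soup.find_all("tr"):
    cells = tr.find_all("td", recursive=False)
    # A score row has exactly two cells: an empty spacer and the details cell.
    if len(cells) != 2:
        continue
    spacer, details = cells
    if spacer.find(True) is not None or spacer.get_text(strip=True):
        continue
    span = details.find("span")
    if span is not None:
        # "120 points" -> "120"
        scores.append(span.get_text().split(" ")[0])

print(scores)
```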

Not that great, right?

If you rely on CSS selectors, it becomes easier.
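With `select`, a single selector string does the structural work. The table below is a simplified, hypothetical stand-in for the HN markup, so the exact selector is an assumption tied to this sample.

```python
from bs4 import BeautifulSoup

html = """
<table>
<tr><td><a href="https://example.com/a">First post</a></td></tr>
<tr><td></td><td><span>120 points</span> by <a href="/user?id=alice">alice</a></td></tr>
<tr><td><a href="https://example.com/b">Second post</a></td></tr>
<tr><td></td><td><span>45 points</span> by <a href="/user?id=bob">bob</a></td></tr>
</table>
"""
soup = BeautifulSoup(html, "html.parser")

# The first <span> directly inside the second cell of a row.
score_spans = soup.select("td:nth-child(2) > span:nth-child(1)")
scores = [span.get_text().split(" ")[0] for span in score_spans]
print(scores)
```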

This is much clearer and simpler, right? Of course, this example artificially highlights the usefulness of CSS selectors. But you will quickly see that the DOM structure of a page is often more reliable than its class names.

Easily debuggable

Another thing that makes CSS selectors great for web scraping is that they are easily debuggable. I'll show you how. Open Chrome, then open the developer tools (right-click -> “Inspect”), click on the Elements panel, and press Ctrl-F (or Cmd-F on macOS) to enter search mode.

In the search bar, you'll be able to write any CSS expression you want, and Chrome will instantly find all elements matching it.

Iterate over the results by pressing Enter to check that you are correctly getting everything you need.

What is great with Chrome is that it works the other way around too. You can also right-click on an element in that panel, click “Copy -> Copy selector”, and the selector will be copied to your clipboard.

Powerful

CSS selectors, and particularly pseudo-classes, allow you to select any elements you want with one simple string.

Child and descendants

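You can select direct children with the > combinator (a > p matches every p directly inside an a) and descendants with a space (a p matches every p anywhere inside an a).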
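A small sketch of the difference, on made-up markup:

```python
from bs4 import BeautifulSoup

html = "<div><p>direct</p><section><p>nested</p></section></div>"
soup = BeautifulSoup(html, "html.parser")

# "div > p": only <p> tags that are direct children of a <div>.
direct_children = [p.text for p in soup.select("div > p")]

# "div p": every <p> anywhere inside a <div>.
descendants = [p.text for p in soup.select("div p")]

print(direct_children, descendants)
```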

And you can mix them together:
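For instance, a selector can chain several child and descendant steps. The class names `test` and `example` and the sample markup below are hypothetical.

```python
from bs4 import BeautifulSoup

html = """
<div>
<ul>
<li class="test">
<div><p class="example"><span>target</span><em><span>too deep</span></em></p></div>
</li>
</ul>
<p class="example"><span>outside .test</span></p>
</div>
"""
soup = BeautifulSoup(html, "html.parser")

# Child steps (>) and descendant steps (space) combined in one selector.
matches = [span.text for span in soup.select("div > ul > .test .example > span")]
print(matches)
```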

This will totally work.

Siblings

This one is one of my favorites because it allows you to select elements based on the elements on the same level in the DOM hierarchy, hence the sibling expression.

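To select all p elements that come after an h2 at the same level, you can use the h2 ~ p selector. If you only want a p that comes directly after an h2, use h2 + p instead. For example, if an h2 is followed by two sibling p elements, h2 ~ p matches both of them, while h2 + p matches only the first.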
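Here is a sketch of both sibling combinators on a sample section (the markup is an assumption made for the demonstration):

```python
from bs4 import BeautifulSoup

html = """
<section>
<p>Paragraph 1</p>
<h2>Heading</h2>
<p>Paragraph 2</p>
<p>Paragraph 3</p>
</section>
"""
soup = BeautifulSoup(html, "html.parser")

# h2 ~ p: every <p> sibling that comes after the <h2>.
following = [p.text for p in soup.select("h2 ~ p")]

# h2 + p: only the <p> immediately after the <h2>.
adjacent = [p.text for p in soup.select("h2 + p")]

print(following, adjacent)
```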

Attribute selectors

Attribute selectors allow you to select elements with particular attribute values. So, p[data-test='foo'] will match any p whose data-test attribute is equal to foo.
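A short sketch, using a hypothetical data-test attribute on sample paragraphs:

```python
from bs4 import BeautifulSoup

html = """
<div>
<p data-test="foo">match</p>
<p data-test="bar">other value</p>
<p>no attribute</p>
</div>
"""
soup = BeautifulSoup(html, "html.parser")

# Exact attribute value.
exact = [p.text for p in soup.select("p[data-test='foo']")]

# Attribute present, whatever its value.
present = [p.text for p in soup.select("p[data-test]")]

print(exact, present)
```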

Position pseudo classes

If you want to select the last p inside a section, you can also do it in “pure” CSS by leveraging position pseudo-classes. For this particular example, you just need this selector: section p:last-child. If you want to learn more about this, I suggest you take a look at this article
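A sketch of a few position pseudo-classes on a sample section (the markup is made up for the example):

```python
from bs4 import BeautifulSoup

html = "<div><section><p>first</p><p>middle</p><p>last</p></section></div>"
soup = BeautifulSoup(html, "html.parser")

# Note that :last-child takes no parentheses, unlike :nth-child(n).
last = [p.text for p in soup.select("section p:last-child")]
first = [p.text for p in soup.select("section p:first-child")]
second = [p.text for p in soup.select("section p:nth-child(2)")]

print(first, second, last)
```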

Maintainable code

I also think that CSS expressions are easier to maintain. For example, at ScrapingBee, when we do custom web scraping tasks all of our scripts begin like this:
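One plausible shape for such a script, as a sketch only: the selectors are grouped as constants at the top so they are the single place to edit when the page changes. The constant names, the `extract_product` helper, and the sample page are all hypothetical.

```python
from bs4 import BeautifulSoup

# Hypothetical selectors for an imaginary product page; adjust them to the target site.
TITLE_SELECTOR = "h1"
PRICE_SELECTOR = "div.product > span:nth-child(2)"
DESCRIPTION_SELECTOR = "div.product ~ p"


def extract_product(html):
    """Extract a few fields using only the selectors declared above."""
    soup = BeautifulSoup(html, "html.parser")
    return {
        "title": soup.select_one(TITLE_SELECTOR).get_text(strip=True),
        "price": soup.select_one(PRICE_SELECTOR).get_text(strip=True),
        "description": soup.select_one(DESCRIPTION_SELECTOR).get_text(strip=True),
    }


sample = """
<div id="page">
<h1>Blue mug</h1>
<div class="product"><span>Price:</span><span>$12</span></div>
<p>A sturdy ceramic mug.</p>
</div>
"""
product = extract_product(sample)
print(product)
```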

This makes it easy and quick to fix scripts when the DOM changes. The laziest way to do it is to simply copy/paste what Chrome gives you when you right-click on an element. If you do this, be careful: Chrome tends to add a lot of useless selectors when you use this trick. So do not hesitate to clean them up a bit before using them in your script.

Conclusion

In the end, everything you can do with pure CSS selectors you can also do with BeautifulSoup4. But I think choosing the former is the best way to go.

I hope you liked this article about web scraping in Python and that it will make your life easier.

If you'd like to read more about web scraping in Python do not hesitate to check out our extensive Python web scraping guide.

You might also be interested in our XPath tutorial.

Happy Scraping,

Pierre de Wulf